The U.S. government’s break with Anthropic revealed that there are no consistent rules governing artificial intelligence, but a bipartisan coalition of thinkers has put together something the government has so far refused to create: a framework for what responsible AI development should actually look like.

Although the Pro-Human Declaration was finalized before last week’s standoff between the Pentagon and Anthropic, the collision of the two events was lost on no one involved.

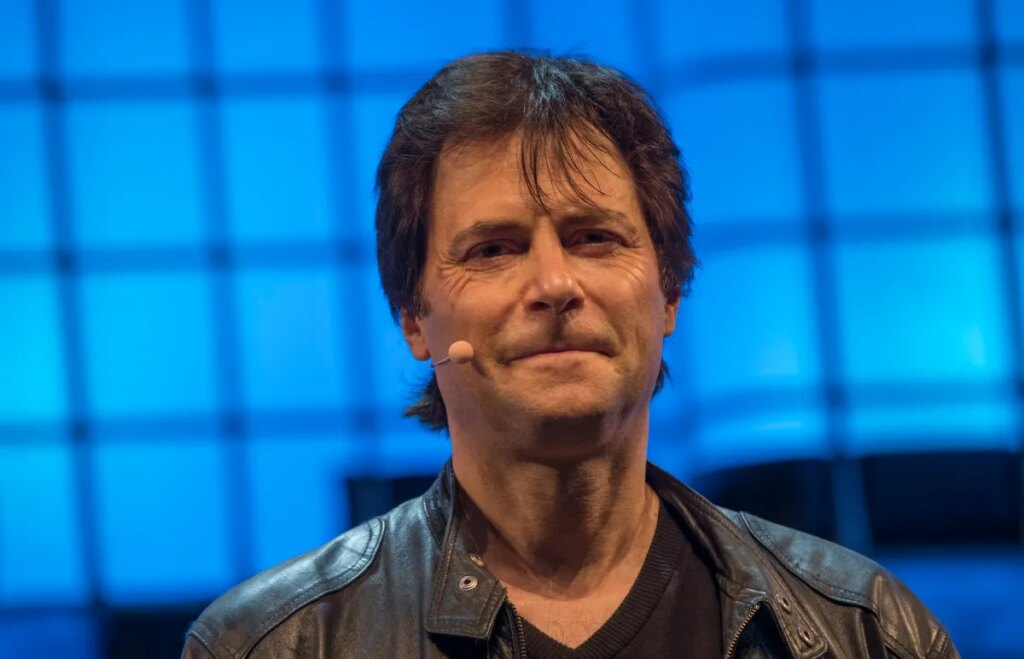

“Something very remarkable has happened in America over the last four months,” Max Tegmark, an MIT physicist and AI researcher who helped organize the effort, said in an interview. “Suddenly, polls reveal that 95% of all Americans oppose an unregulated race to superintelligence.”

The newly released document, signed by hundreds of experts, former government officials, and celebrities, opens with the blunt observation that humanity is at a crossroads. One path, which the declaration calls “the race to replace,” leads to humans being replaced first as workers and then as decision-makers, as power accrues to unaccountable institutions and their machines. The other leads to AI that vastly expands human potential.

That second path rests on five pillars: keeping humans in charge, avoiding the concentration of power, protecting the human experience, preserving individual liberty, and holding AI companies legally accountable. Among its stronger provisions are an outright ban on superintelligence development until there is scientific consensus that it can be done safely and genuine democratic buy-in, mandatory off switches on powerful systems, and a prohibition on architectures capable of self-replication, autonomous self-improvement, or resistance to shutdown.

The declaration arrives at a moment when its urgency is easy to grasp. On the last Friday in February, Secretary of Defense Pete Hegseth designated Anthropic, whose AI already runs on classified military platforms, a “supply chain risk,” a label usually reserved for companies with ties to China, after the company refused to give the Pentagon unrestricted use of its technology. Hours later, OpenAI announced its own agreement with the Department of Defense, whose terms legal experts say will be difficult to enforce in any meaningful way. The episode shows how costly congressional inaction on AI has become.

Dean Ball, a senior fellow at the Foundation for American Innovation, later told The New York Times: “This is not just a fight over a contract. This is the first conversation we’ve had as a nation about controlling our AI systems.”

When we spoke, Tegmark offered an analogy most people will recognize. “We don’t have to worry that some drug company is going to come out with another drug that does tremendous harm before people figure out how to make that drug safe, because the FDA won’t allow anything to come out until it’s safe enough,” he said.

Turf wars in Washington rarely generate enough public pressure to change the law. Tegmark instead believes child safety is the issue most likely to break the current impasse. Accordingly, the declaration calls for mandatory pre-deployment testing of AI products, especially chatbots and companion apps aimed at younger users, covering risks such as increased suicidal ideation, worsening mental health, and emotional manipulation.

“If a creepy old man pretends to be a girl and sends an email to an 11-year-old boy trying to convince the boy to commit suicide, he could be sent to prison for that,” Tegmark said. “We already have laws. It’s illegal. So why would it be any different if a machine does it?”

He believes that once the principle of pre-release testing is established for children’s products, its scope will almost inevitably expand. “People will come and say, let’s add some other requirements. Maybe we should also test that this doesn’t contribute to terrorist bioweapon production. Maybe we should test to make sure superintelligent agents don’t have the ability to overthrow the U.S. government.”

It’s no small feat that Steve Bannon, a former adviser to President Trump, and Susan Rice, President Obama’s national security adviser, signed the same document, along with former Chairman of the Joint Chiefs of Staff Mike Mullen and progressive faith leaders.

“What they agree on, of course, is that they’re all humans,” Tegmark said. “When it comes to whether you want a human future or a machine future, of course they’re going to be on the same side.”