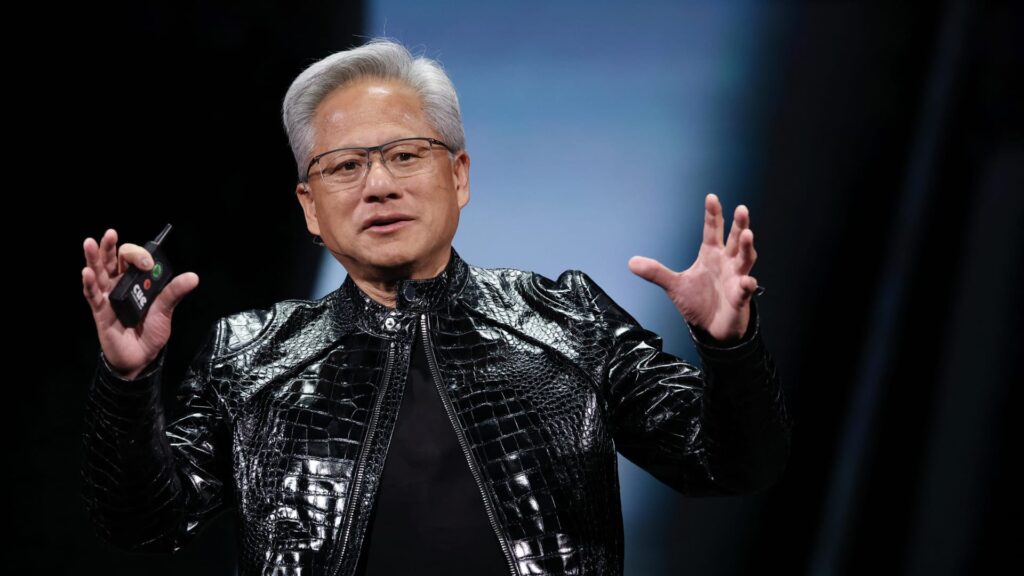

On the day before Christmas, when stocks were barely moving, an expensive and pivotal deal sent shockwaves through the AI computing race. Nvidia was spending a reported $20 billion to license technology from chip startup Groq and hire key employees, including its CEO, who had previously helped Google develop a major alternative to Nvidia’s AI processors.

In the months since, Nvidia’s aggressive move has arguably flown under the radar, given its competitive implications in the artificial intelligence gold rush. Perhaps it got lost in the Christmastime shuffle or in the flurry of other deals and investments that have flowed from the world’s most valuable companies over the past year.

That should change next week, when Nvidia holds its annual GTC event, known in its early days as the GPU Technology Conference, in San Jose, California. The four-day gathering is a big deal for AI. It is based at the San Jose McEnery Convention Center, though Nvidia CEO Jensen Huang’s Monday keynote will be held at the nearby SAP Center, where the NHL’s San Jose Sharks play. It’s a venue befitting Jensen’s leather-jacketed rock star status.

Throughout the week, Nvidia plans to share at least some of its vision for incorporating Groq’s chip technology into its already dominant AI computing ecosystem. “We have some great ideas that we want to share with you at GTC,” Jensen said during the chipmaker’s late February earnings call. Those ideas figure to be among the most notable developments at a conference dubbed the “Super Bowl of AI.” Nvidia is also expected to provide updates on the roadmap for its staple graphics processing units (GPUs), including the next-generation Vera Rubin family.

A key reason for the Groq deal: Wall Street analysts say Nvidia is likely to leverage Groq’s technology to build an entirely new chip targeted at the everyday use of AI models, a process known as inference. Inference is becoming a bigger and more competitive part of the AI computing picture.
It is also a source of revenue for Nvidia’s data center customers.

Nvidia’s GPUs are the clear performance leaders in the training phase of AI computing, where models are fed large amounts of data in preparation for real-world use. That training dominance has fueled Nvidia’s meteoric rise in recent years. However, the inference market is becoming more crowded as AI adoption goes mainstream and customers look for cost-effective ways to meet burgeoning demand. Companies are basically trying to get their hands on every type of chip they can.

Advanced Micro Devices, the distant No. 2 maker of GPUs, is gaining some traction in inference, recently signing Meta Platforms as a customer in a splashy partnership announcement. Meanwhile, the custom chip efforts of big tech companies, including Meta, are generally seen as targeting the inference market.

Indeed, Google’s in-house Tensor Processing Units (TPUs) are a formidable challenger in both training and inference, and the recent success of Google’s Gemini chatbot, which is built on TPUs, has strengthened their reputation as Nvidia’s biggest threat. Google co-designs the TPU with Broadcom. Amazon is also touting its Trainium chip’s capabilities in both tasks. Anthropic, the AI startup behind the Claude model, uses Trainium. However, reflecting its pursuit of computing power of all kinds, Anthropic is also using TPUs and signed a deal with Nvidia in the fall.

Another competitor to be aware of is Cerebras, an AI startup preparing for an initial public offering. Oracle co-CEO Clay Magouyrk name-dropped Cerebras for the first time during an earnings call earlier this week.

Nvidia is no slouch in inference. The figure is a bit dated, but Nvidia disclosed in 2024 that about 40% of its revenue came from inference.
At last year’s GTC, Jensen told analysts that “the majority of the world’s inference today is based on Nvidia.” Also, during Nvidia’s latest earnings call in late February, finance chief Colette Kress highlighted that industry publication SemiAnalysis recently “declared Nvidia king of inference,” noting that the current generation of Grace Blackwell GPUs offers significant performance improvements over the previous Hopper generation.

Where Groq fits

Nvidia clearly saw an opportunity to improve what it brings to the inference table. Otherwise, it wouldn’t have spent the reported $20 billion on Groq’s technology and talent.

Nvidia did not acquire Groq outright, perhaps to avoid antitrust scrutiny. The license agreement is billed as non-exclusive, and Groq continues to operate its inference cloud service, which runs on its proprietary chips. (In case there is any confusion, the company has no connection to the other Grok, Elon Musk’s AI chatbot.)

However, some key players jumped to Nvidia in this deal. The most notable addition is Jonathan Ross, the founder and now former CEO of Groq. Before starting Groq in 2016, Ross was part of the Google team that developed the original TPU. Ross now holds the title of Chief Software Architect at Nvidia.

Groq has developed and brought to market what it calls an LPU, short for Language Processing Unit, a chip focused on inference. In various podcast interviews over the years, Ross made it clear that Groq had no intention of competing with Nvidia in training. Instead, Groq saw inference computing as a place where startups could innovate and carve out a lane, he said. So Groq set out to develop a chip for running AI models that prioritized speed and efficiency at low cost.

A key reason Nvidia’s GPUs excel at training AI models is their ability to perform large numbers of calculations at the same time, known as parallelism. Put simply, AI models work to identify patterns within mountains of training data.
This requires many calculations to be performed simultaneously. That is why GPUs are better suited to AI training than traditional computer processors (CPUs), which perform tasks sequentially rather than in parallel.

Another important characteristic of GPUs is their flexibility, which is primarily made possible by Nvidia’s CUDA software platform. Jensen has said CUDA (short for Compute Unified Device Architecture) allows GPUs to run all kinds of workloads, including inference.

When an AI model is deployed for inference and receives a user prompt, the model essentially draws on all the patterns it has learned to decide piece by piece (token by token, in AI terminology) what the most appropriate response should be. It makes those decisions based on probabilities learned from the training data.

But fundamentally, training and inference are different kinds of computing, and each has a different set of attributes that are most desirable in a chip. Groq has designed a chip that excels at inference, especially real-time tasks where speed is paramount.

Groq’s LPU uses a type of short-term memory known as SRAM. It sits directly on top of the chip’s engine and is the driving force behind its high speed. GPUs, on the other hand, use a type of short-term memory called high-bandwidth memory (HBM). It is placed right next to the GPU’s engine, not on top of it. The AI boom has created a shortage of HBM, driving up memory prices.

“GPUs are very good at training models. When someone wants to train a model, I just say, ‘Use the GPU, don’t talk to us,’” Ross said in a podcast interview with wealth advisory firm Lumida in late 2023.

“But the big difference is that when you run one of these models, rather than training the model, when you run it after it’s already been created, you can’t generate the 100th word until you’ve generated the 99th word,” he added. “So there’s a sequential component that you simply can’t get from a GPU.
… It’s not just how many calculations you can complete in parallel, it’s how quickly you can complete them. And we do the calculations much faster.”

But Ross said he believes Nvidia’s staple GPUs and Groq’s technology can complement each other. He made this clear in another interview, on The Capital Markets podcast, dated February 2025, many months before he moved from Groq to Nvidia.

“In fact, we’ve done a little bit of experimenting with taking parts of the model and running them on the LPU and running the rest on the GPU because they’re so fast compared to GPUs. And it really speeds up and makes GPUs more economical. So people are already deploying a lot of GPUs, so one of the use cases we looked at is selling some of the LPUs and doing a nitro boost, so to speak,” Ross said.

That comment really jumped out at us when we came across this year-old interview in search of more insight. Hearing Ross say something like that long before joining Nvidia made us even more intrigued to hear Jensen’s vision next week. There are many possibilities for Nvidia hardware that incorporates Groq’s technology.

Indeed, as AI advances, it makes sense that Nvidia would branch out into more specialized chips. History shows that the more a technology matures, the more specialized it becomes.

Back on Nvidia’s February earnings call, Jensen said Nvidia was looking at Groq in the same way it looked at Mellanox, the networking equipment provider it acquired six years ago. “What we’re going to do is extend Nvidia’s architecture in the same way that we extended it with Mellanox, using Groq as an accelerator,” Jensen said.

The Mellanox acquisition has aged like fine wine. Nvidia’s networking capabilities have been a key element of its success in the AI boom, turning Nvidia from just a chip designer into a one-stop shop for AI computing. In the fourth quarter of fiscal 2026 alone, Nvidia’s networking business generated approximately $11 billion in revenue.
That is about the same as AMD’s overall revenue. Nvidia’s fourth-quarter companywide revenue exceeded expectations, increasing 73% year over year to $68.13 billion.

Less than three years ago, Nvidia’s networking revenue was running at about $10 billion over a full 12 months. It now reaches $11 billion in just three months and is growing explosively along with GPU revenue.

Investors can only hope that the Groq deal ends up being as successful as Mellanox. The journey to find out begins next week.

(Jim Cramer’s Charitable Trust is long NVDA, GOOGL, META, AVGO, and AMZN. See here for a complete list of stocks.)

As a subscriber to Jim Cramer’s CNBC Investing Club, you’ll receive trade alerts before Jim Cramer makes a trade. After Jim sends a trade alert, he waits 45 minutes before buying or selling stocks in his charitable trust’s portfolio. If Jim talks about a stock on CNBC TV, he will issue a trade alert and then wait 72 hours before executing the trade.

The above Investing Club information is subject to our Terms of Use and Privacy Policy, along with our disclaimer. No fiduciary duties or obligations exist or arise from your receipt of information provided in connection with the Investing Club. No specific results or benefits are guaranteed.