When people talk about the cost of AI infrastructure, the focus is usually on Nvidia and GPUs, but memory is becoming an increasingly important part of the picture. DRAM chip prices have jumped roughly sevenfold in the last year as hyperscalers prepare to build out billions of dollars' worth of new data centers.

At the same time, there's a growing discipline around orchestrating all that memory so the right data reaches the right agent at the right time. Companies that master it will be able to run the same queries with fewer tokens, which could be the difference between folding and staying in business.

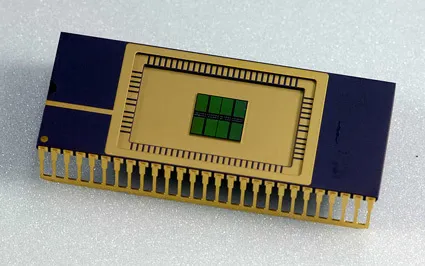

Semiconductor analyst Doug O’Loughlin has an interesting look at the importance of memory chips on his Substack, where he talks with Val Bercovici, chief AI officer at Weka. They are both semiconductor experts, so their focus is on the chips rather than the broader architecture, but the implications for AI software are significant too.

I was especially struck by this passage, in which Bercovici describes the growing complexity of Anthropic’s prompt-caching documentation:

> You can see it by visiting Anthropic’s prompt caching pricing page. It started as a very simple page six or seven months ago, especially as Claude Code was launching. It just said, “Use caching, it’s cheaper.” Now it’s an encyclopedia of advice on exactly how many cache writes to buy in advance. You’ve got 5-minute windows, which are very common across the industry, or 1-hour windows, and nothing above that. That’s a really important signal. And of course, you’ve got all sorts of arbitrage opportunities around the pricing of cache reads based on how many cache writes you’ve purchased upfront.

The question here is how long Claude holds your prompt in cached memory. You can pay for a 5-minute window, or pay more for a 1-hour window. It’s much cheaper to draw on data that’s still in the cache, so if you manage it properly, you can save a lot of money. There is a catch, though: Every time you add new data to your query, something else may get pushed out of the cache window.
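To make that trade-off concrete, here is a rough Python sketch of the arithmetic. Everything in it is an assumption for illustration: the `CachePricing` and `prompt_cost` names are made up, the price multipliers are placeholders rather than figures quoted from any provider's pricing page, and the model assumes that each request which finds the prefix in cache restarts the window. It only covers the cost of re-sending one shared prefix, not the rest of the query.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CachePricing:
    """Hypothetical pricing tier; all multipliers are illustrative assumptions."""
    ttl_seconds: int                # how long a cached prefix stays warm
    write_multiplier: float         # cost of writing the prefix to cache, relative to base
    read_multiplier: float = 0.10   # cost of reading a warm prefix, relative to base
    base_price: float = 1.0         # cost units per uncached input token


def prompt_cost(request_times, prefix_tokens, pricing):
    """Total cost of sending the same prefix at each timestamp (in seconds).

    A request that lands inside the window pays the cheap read price;
    one that lands after the window has lapsed pays for a fresh write.
    Assumption: every request restarts the window.
    """
    total = 0.0
    expires_at = None
    for t in sorted(request_times):
        if expires_at is not None and t < expires_at:
            multiplier = pricing.read_multiplier    # cache hit
        else:
            multiplier = pricing.write_multiplier   # cold or expired: write again
        total += prefix_tokens * pricing.base_price * multiplier
        expires_at = t + pricing.ttl_seconds
    return total


def uncached_cost(request_times, prefix_tokens, base_price=1.0):
    """Baseline: pay full input price for the prefix on every request."""
    return len(request_times) * prefix_tokens * base_price


five_minute = CachePricing(ttl_seconds=300, write_multiplier=1.25)
one_hour = CachePricing(ttl_seconds=3600, write_multiplier=2.0)

busy = [0, 60, 120]      # three requests, one minute apart
sparse = [0, 600, 1200]  # three requests, ten minutes apart

for label, times in (("busy", busy), ("sparse", sparse)):
    print(label,
          "uncached:", uncached_cost(times, 1000),
          "5-minute:", prompt_cost(times, 1000, five_minute),
          "1-hour:", prompt_cost(times, 1000, one_hour))
```

Under these made-up numbers, the short window is the cheapest option when requests arrive a minute apart. When they arrive ten minutes apart, the short window lapses every time and ends up costing more than not caching at all, while the pricier long window comes out ahead. That is the kind of calculation the pricing page is now asking customers to make.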

This is complex, but the conclusion is very simple. Memory management for AI models will be a big part of the future of AI. Companies that do this well will rise to the top.

And a lot of progress is being made in this new field. Back in October, I covered a startup called Tensormesh that was working on one layer of the stack, known as cache optimization.

Opportunities exist elsewhere in the stack, too. Lower down, there's the question of how data centers use the different types of memory they have. (The interview includes a nice discussion of when DRAM chips are used instead of HBM, although it’s pretty deep in the hardware weeds.) Higher up the stack, end users are figuring out how to configure their model suites to take advantage of the shared cache.

As companies get better at memory orchestration, they'll use fewer tokens and inference will get cheaper. Meanwhile, models are becoming more efficient at processing each token, pushing the cost down further. As server costs fall, many applications that don't seem viable now will gradually become profitable.