Nvidia CEO Jensen Huang said the company’s recent $30 billion investment in OpenAI “may be its last investment” in the artificial intelligence startup before it goes public towards the end of the year.

Huang said the opportunity to invest $100 billion in OpenAI, a figure the two companies touted in September as part of a large infrastructure deal, is probably “not in the cards.”

“The reason is because we’re going public,” Huang said Wednesday at the Morgan Stanley Technology, Media and Telecom Conference.

He also noted that Nvidia’s $10 billion investment in OpenAI rival Anthropic will likely be its last. Nvidia announced plans to invest in Anthropic alongside Microsoft in November.

Huang’s comments come after months of speculation about the scope of Nvidia and OpenAI’s relationship. Nvidia disclosed in its November quarterly report that a previously announced $100 billion deal may not materialize, and the Wall Street Journal reported in January that the deal was “on ice.”

Nvidia included similar language in its February quarterly report, noting that there is “no guarantee” that the company will “enter into an investment and collaboration agreement with OpenAI or that the transaction will be completed.”

The chipmaker’s $30 billion investment in OpenAI was revealed as part of a $110 billion funding round the company announced on Friday. The round also includes a $50 billion commitment from Amazon and a $30 billion commitment from SoftBank.

OpenAI announced Friday that it has secured 3 gigawatts of dedicated inference capacity and 2 gigawatts of training capacity on Nvidia’s Vera Rubin systems for its AI data center as part of the agreement.

The companies’ September deal, which shook the tech industry and triggered a flurry of infrastructure deals, outlined the structure for Nvidia’s multi-year investment in OpenAI as it brings new supercomputing facilities online. In contrast, Nvidia’s $30 billion investment is not tied to any deployment milestones.

The chipmaker has been one of the biggest winners of the AI boom, as it makes the graphics processing units (GPUs) that AI companies need to train models and run large-scale workloads.

Still, the needs of AI companies have shifted from training to inference, a type of processing that allows AI models to quickly respond to user queries, which is putting some pressure on Nvidia. The company is reportedly developing a new chip dedicated to inference, and OpenAI is expected to be one of the new processor’s biggest customers.

OpenAI announced in February that it would sign up for a large-scale purchase of “dedicated inference power” from Nvidia. OpenAI has also invested heavily in inference-optimized chips from Amazon and uses Tensor Processing Units from Google.

OpenAI CEO Sam Altman is scheduled to speak at the Morgan Stanley conference on Thursday, according to a person familiar with the schedule who requested anonymity because the details are private.

—CNBC’s Katie Tarasov and Kate Rooney contributed to this report.

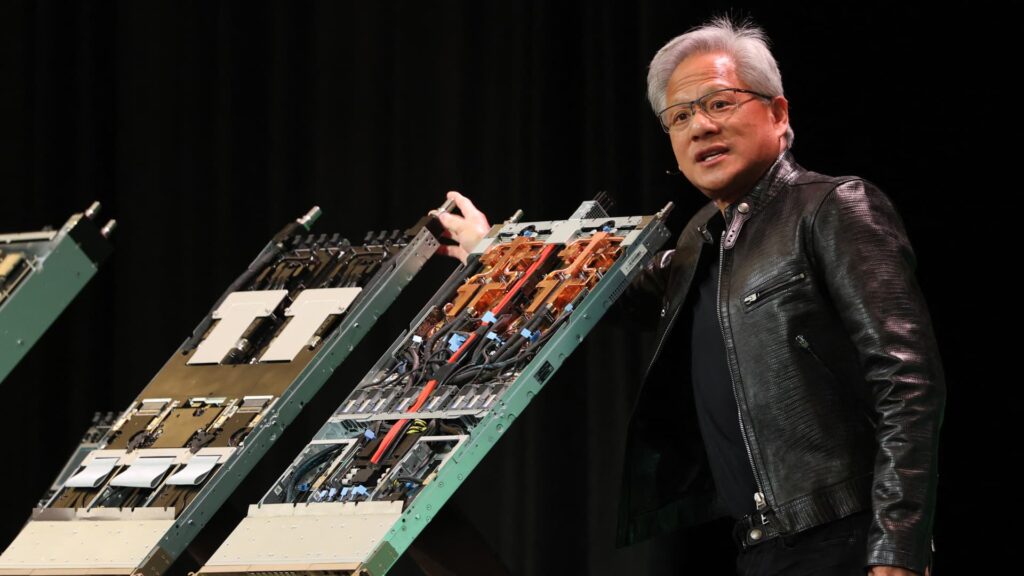

Spotlight: Nvidia CEO Jensen Huang: AI passes a new tipping point