For over 35 years, Arm Holdings licensed its instruction set to the world’s largest chipmakers and collected royalties on every processor built on its designs. The UK-based company is now producing its own physical silicon for the first time.

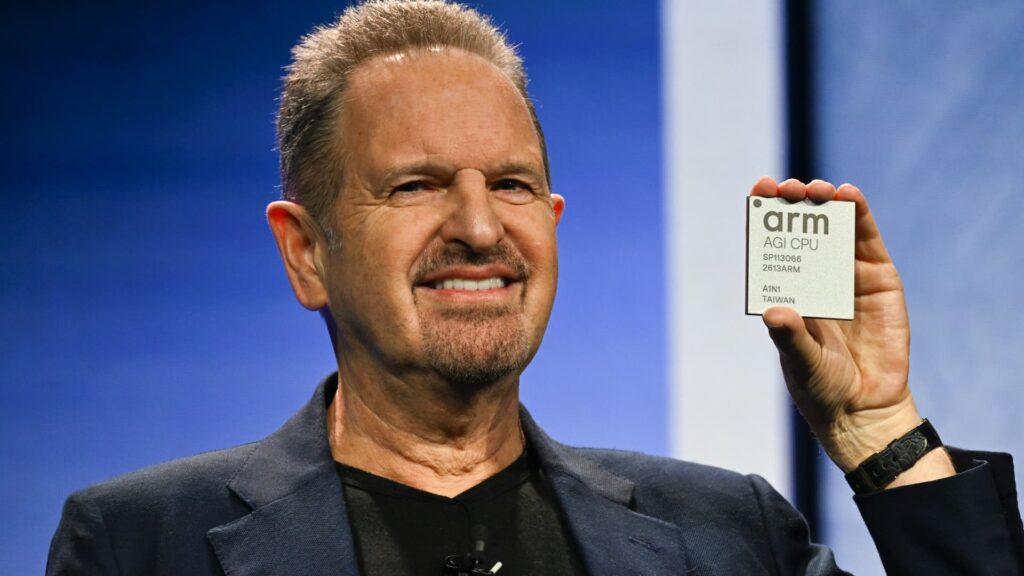

Arm CEO Rene Haas unveiled the company’s first in-house chip at an event in San Francisco on Tuesday. Arm calls its new data center central processing unit the AGI CPU. The long-awaited move marks a major change for the semiconductor company as it enters new competition with its own customers.

Meta, the social media company, was the first to sign on as it builds multi-gigawatt AI data centers and plans to spend up to $135 billion in capital expenditures this year. In February, Meta secured a significant amount of chips from both Nvidia and Advanced Micro Devices.

“In today’s world, there are really only a few players,” Paul Saab, a Meta software engineer who has supported the Arm chip project since its inception in 2023, said in an interview with CNBC. “This adds yet another player to our ecosystem.”

Saab added that the partnership with Arm will “significantly increase the flexibility of our software stack and supply chain.”

Terms of the deal were not disclosed. For Arm, the deal is a major win and signifies recognition from one of the world’s most valuable companies.

“Let’s assume that 5% of Meta’s $115 billion to $135 billion capital investment is earned over time,” said Patrick Moorhead, a semiconductor analyst at Moor Insights & Strategy. “This is a game changer for them on the top line.”
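To put rough numbers on that hypothetical, the short sketch below multiplies the capital spending range by the 5% share. It reads the quote as Arm capturing that share, which is an interpretation, and the variable names are illustrative. It is a back-of-the-envelope check, not a revenue forecast.

```python
# Back-of-the-envelope arithmetic for Moorhead's hypothetical.
# Assumption: "5% ... is earned" means Arm captures 5% of Meta's capex.
CAPEX_LOW_USD_B = 115.0   # low end of Meta's capex range, in $ billions
CAPEX_HIGH_USD_B = 135.0  # high end of Meta's capex range, in $ billions
ASSUMED_SHARE = 0.05      # the 5% share from the quote


def implied_revenue(capex_usd_b: float, share: float = ASSUMED_SHARE) -> float:
    """Return the implied revenue in $ billions for a given capex figure."""
    return capex_usd_b * share


low = implied_revenue(CAPEX_LOW_USD_B)
high = implied_revenue(CAPEX_HIGH_USD_B)
print(f"Implied range: ${low:.2f}B to ${high:.2f}B")
```

Under those assumptions the range works out to roughly $5.75 billion to $6.75 billion.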

This is also the latest sign that demand for CPUs is recovering. Nvidia, which has established itself as a leader in AI graphics processing units, recently told CNBC that CPUs are “becoming the bottleneck” as agent-based AI changes computing needs. Futurum Group calls this a “silent supply crisis” and predicts that CPU market growth could outpace GPU growth by 2028.

GPUs are ideal for training and running AI models because they can perform many operations simultaneously on thousands of cores, while CPUs have fewer powerful cores that perform general-purpose tasks sequentially. Agentic AI moves large amounts of data between multiple agents, which requires a lot of general computing power.

At Nvidia’s annual GTC conference last week, CEO Jensen Huang unveiled a rack filled entirely with Vera CPUs. At Tuesday’s Arm event, Huang appeared in a recorded statement congratulating Arm on its new CPU.

Top executives from Google, Amazon, Microsoft, Oracle, Broadcom, Micron, Samsung, SK Hynix and Marvell also appeared in the video. Arm told CNBC that about 50 partners pledged their support ahead of the launch.

“This is a trillion-dollar market, and what we’re seeing over and over again is that our partners are actually coming out and understanding and recognizing that this is actually a great thing for the industry,” Mohamed Awad, Arm’s head of cloud AI, said in an interview with CNBC.

CNBC got an exclusive first look at Arm’s new chip lab, which is preparing the new CPUs for full-scale production later this year.

Mohamed Awad, head of cloud AI at Arm, gives CNBC’s Katie Tarasov a tour of the chip lab in Austin, Texas, where Arm made its first in-house chip, the AGI CPU, on Friday, March 6, 2026.

Erin Black | CNBC

“Create the chips you need”

Arm spent $71 million and about 18 months building three new labs at its Austin, Texas, campus. The once small team has grown to over 1,000 people. Inside the labs, engineers run chips fresh off the factory line through multiple tests.

Like nearly all fabless AI chipmakers, Arm currently relies on Taiwan Semiconductor Manufacturing Company for fabrication. All Arm CPUs built on TSMC’s 3-nanometer node are currently manufactured in Taiwan. TSMC will soon build a 3nm fab in Arizona, and Awad said Arm “wants to manufacture here. It really depends on what the customer ultimately wants.”

Arm’s architecture is best known as the basis for the mobile chips found in nearly all smartphones. The company entered the data center chip market in 2018 with the launch of its Neoverse platform. Amazon brought Neoverse into the mainstream with its first custom processor, Graviton, and now Google and Microsoft are also basing their AI chips on Arm.

“If Arm didn’t exist, all the companies that have their own processors wouldn’t be able to develop their own processors,” Moorhead said.

Still, most server chips are built on the traditional x86 architecture from Intel and AMD. Moorhead says x86 is “tried and true” and “can do just about anything.”

The advantage of the Arm architecture is that the design is easy to customize and is “very efficient,” Moorhead said. “You can create the chips you need without doing anything else.”

Awad told CNBC that the Arm team has “relentlessly optimized” the new AGI CPU for artificial general intelligence, hence the name. Up to 64 new CPUs, approximately 8,700 total cores, can be housed in a single air-cooled rack. This is a high-density configuration that Arm is betting will appeal to power-constrained data center customers around the world.
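The rack figures above imply a per-chip core count, which the sketch below works out. The only inputs are the two numbers Arm gave (64 CPUs and approximately 8,700 cores per rack). Arm has not stated the per-CPU core count here, so the result is an inference from rounded figures, not a specification.

```python
# Rough inference of cores per CPU from the rack-level figures.
# Assumption: all 64 CPUs in the rack have the same number of cores.
CPUS_PER_RACK = 64
APPROX_CORES_PER_RACK = 8_700  # "approximately 8,700", a rounded figure

cores_per_cpu = APPROX_CORES_PER_RACK / CPUS_PER_RACK
nearest_whole = round(cores_per_cpu)

print(f"Implied cores per CPU: about {cores_per_cpu:.1f}")
print(f"Nearest whole number: {nearest_whole} "
      f"({nearest_whole * CPUS_PER_RACK:,} cores per rack)")
```

The nearest whole number, 136 cores per CPU, would give 8,704 cores per rack, consistent with the rounded figure.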

“You get twice the performance per watt of an x86 rack,” Awad said. “This means twice the performance in the same footprint, same power.”

Meta’s Saab said wattage is “a very scarce resource.”

“If you have a best-in-class CPU that provides the best performance per watt possible, you can allocate more watts to other parts of your infrastructure,” he said.
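Saab’s point about reallocating watts can be illustrated with a simple power-budget calculation. Every number below is hypothetical and chosen only for illustration, apart from the 2x performance-per-watt ratio, which is Awad’s claim and is taken here at face value.

```python
# Illustrative power-budget sketch; all figures except the 2x ratio are hypothetical.
SITE_BUDGET_W = 1_000_000      # hypothetical total power budget, in watts
REQUIRED_CPU_PERF = 400_000    # hypothetical CPU throughput needed (arbitrary units)
BASELINE_PERF_PER_WATT = 1.0   # hypothetical x86 baseline (units per watt)
ARM_PERF_PER_WATT = 2.0 * BASELINE_PERF_PER_WATT  # Awad's claimed 2x


def cpu_power_needed(required_perf: float, perf_per_watt: float) -> float:
    """Watts needed to deliver the required throughput at a given efficiency."""
    return required_perf / perf_per_watt


baseline_cpu_w = cpu_power_needed(REQUIRED_CPU_PERF, BASELINE_PERF_PER_WATT)
arm_cpu_w = cpu_power_needed(REQUIRED_CPU_PERF, ARM_PERF_PER_WATT)
freed_w = baseline_cpu_w - arm_cpu_w  # watts available for other infrastructure

print(f"CPU power at baseline efficiency: {baseline_cpu_w:,.0f} W")
print(f"CPU power at 2x efficiency:       {arm_cpu_w:,.0f} W")
print(f"Freed for other infrastructure:   {freed_w:,.0f} W "
      f"({freed_w / SITE_BUDGET_W:.0%} of the site budget)")
```

For a fixed CPU workload, doubling performance per watt halves the CPU power draw, and the difference can go to accelerators, networking or cooling.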

Meta’s 5 GW Hyperion data center under construction in Richland Parish, Louisiana, January 9, 2026.

Courtesy of Meta

“Available worldwide”

Meta has a strong need for efficiency as it builds large AI data centers in Louisiana, Ohio, and Indiana. The company is also reportedly considering leasing space at the massive Stargate site in Texas, where OpenAI and Oracle have canceled plans to expand capacity by up to 10GW.

Meta’s significant investment in AI comes after its Llama 4 model was poorly received by developers last year.

“They fell behind,” Moorhead said. “They also recognized that they didn’t have enough computing power to do what they needed to do.”

In addition to securing processors from Nvidia and AMD, Meta announced in March four new chips in its proprietary Meta Training and Inference Accelerator product line, which it has been manufacturing since 2023. And now it is adding Arm CPUs to the mix.

“This was essentially a complete replacement, a drop-in replacement for current computing CPUs, and it was intended to be transparent to developers,” Saab said.

Saab has been with Facebook since 2011, when the company launched the Open Compute Project. The consortium now includes hundreds of member companies, including Arm and Nvidia, and works on open hardware designs to help reduce data center energy consumption and costs.

“Our first conversation with Arm was, ‘If we’re going to build this, we don’t want to keep this in-house,'” Saab said. “We’re not like a chip company that’s trying to build a sales channel to sell chips. We wanted to make chips available to the whole world.”

Arm hasn’t disclosed the price of the CPU, but Moorhead expects it to be in the thousands of dollars.

Awad told CNBC that it will be “competitively priced” with the aim of serving as an option for companies that cannot afford to manufacture their own processors in-house.

“It would take 1,000 engineers and a $500 million budget to develop this,” Moorhead said. “So there’s definitely a need in the market.”
