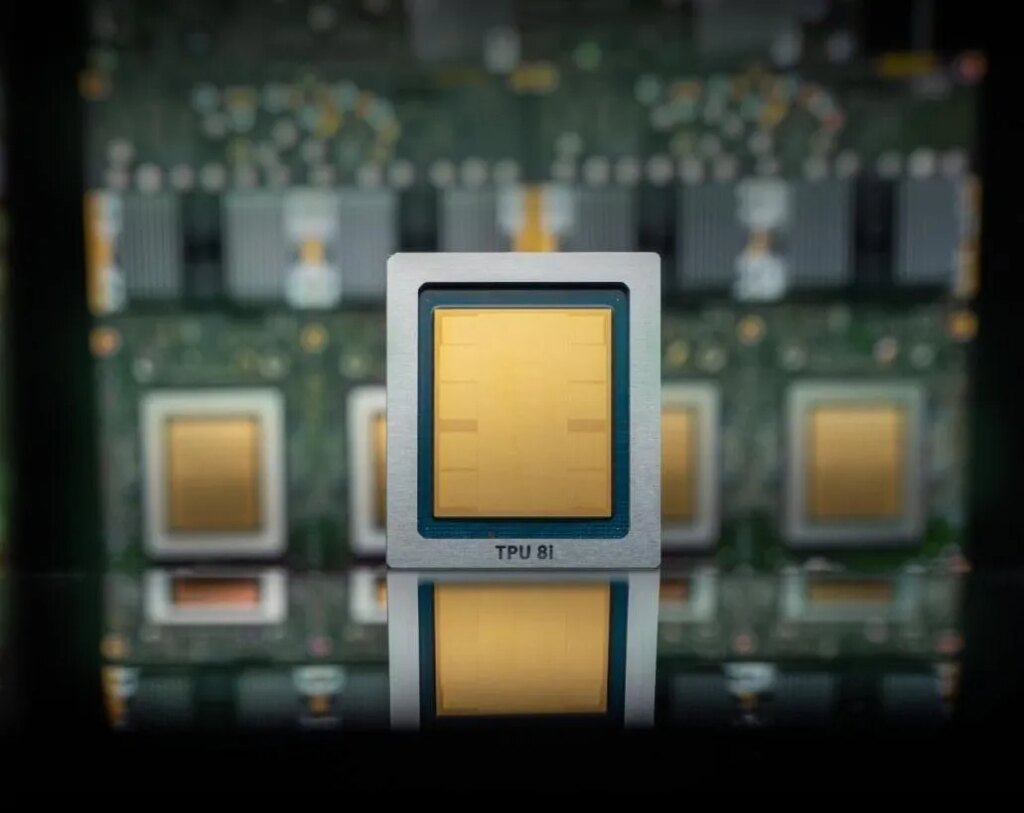

Google Cloud announced Wednesday that the eighth generation of its custom-built AI chips, known as tensor processing units (TPUs), will be split in two. One chip, TPU 8t, is dedicated to model training, while the other, TPU 8i, is built for inference.

Inference is the ongoing use of a trained model: what happens after a user submits a prompt.

As you might expect, the company is touting the new TPUs' performance gains over the previous generation. Google says customers can train AI models up to 3x faster, get 80% more performance per dollar, and link more than 1 million TPUs together in a single cluster. The upshot should be lower energy use and more compute for the customer's money than earlier versions. Google's custom, low-power chips are called tensor processing units, rather than GPUs, because they were designed specifically to accelerate the tensor math at the core of neural networks.

But Google's chips aren't an all-out assault on Nvidia, at least not yet. Like other giant cloud providers such as Microsoft and Amazon, Google uses its own chips to supplement the Nvidia-based systems it offers in its infrastructure, not to replace them. In fact, Google will also offer Nvidia's latest chip, Vera Rubin, in its cloud, which it promises will be available later this year.

Hyperscalers that develop their own AI chips, such as Amazon, Microsoft, and Google, may one day reduce the need for Nvidia as companies move their AI workloads to these clouds and port their apps to the in-house chips.

Still, as things stand, it hasn't paid to bet against Nvidia. As noted chip analyst Patrick Moorhead joked in a post on X, back in 2016, when the search giant launched its first TPU, he predicted that Google's TPU could be bad news for Nvidia (and Intel). Nvidia now has a market capitalization of nearly $5 trillion, so that prediction hasn't exactly stood the test of time.

If all goes as Nvidia plans, Google’s growth as an AI cloud provider will mean even more business for the chip maker, even though many workloads will run on Google’s chips.


Google also said it has agreed to work with Nvidia on networking technology that will let Nvidia-based systems run more efficiently in its cloud. Specifically, the two tech giants are working to enhance a software-based networking technology called Falcon, which Google developed and open sourced in 2023 through the Open Compute Project, the godfather of all open source data center hardware organizations.
