Amazon has pulled off a major coup with Meta thanks to Amazon’s own chip. Meta has signed a deal to use millions of AWS Graviton chips to power its growing AI needs, Amazon announced Friday.

Note that AWS Graviton is not a GPU (graphics processing unit), but an ARM-based CPU (central processing unit, a chip that handles common computing tasks).

GPUs remain the chip of choice for training large models, but once those models are trained, the AI agents built on top of them are changing the types of chips needed. Agents create compute-intensive workloads such as real-time inference, code writing, search, and the coordination required to manage agents through multi-step tasks. AWS’ latest version of Graviton is specifically designed to address AI-related computing needs, the company says.

The deal puts much of Meta’s cash back into AWS rather than competitors like Google Cloud. Last August, Meta signed a six-year, $10 billion deal with Google Cloud, but up until then Meta had primarily been an AWS customer, and also used Microsoft Azure.

We couldn’t help but notice that AWS announced this deal just as the Google Cloud Next conference was ending, in what was effectively a smirk aimed at its cloud rival. Of course, Google also makes its own custom AI chips, and announced a new version at the show.

Amazon also makes its own AI chip, Trainium, which, despite its name, is used for both training and inference. (Inference is when the model is actively processing a prompt after it has been trained.)

But Anthropic has already swooped in with a deal, announced earlier this month, that will claim many of those chips over the next few years. The maker of Claude agreed to spend $100 billion over 10 years to run workloads on AWS, with a particular focus on Trainium, while Amazon agreed to invest an additional $5 billion in Anthropic in return (for a total investment of $13 billion).

Ultimately, the Meta deal lets Amazon point to a huge AI customer as a proof point for its CPUs. These chips compete with Nvidia’s new Vera CPUs, which are also ARM-based and designed to handle AI agent workloads. The difference, of course, is that Nvidia sells its chips and AI systems to enterprises and cloud providers (including AWS), while AWS sells access to its chips only through its cloud services.

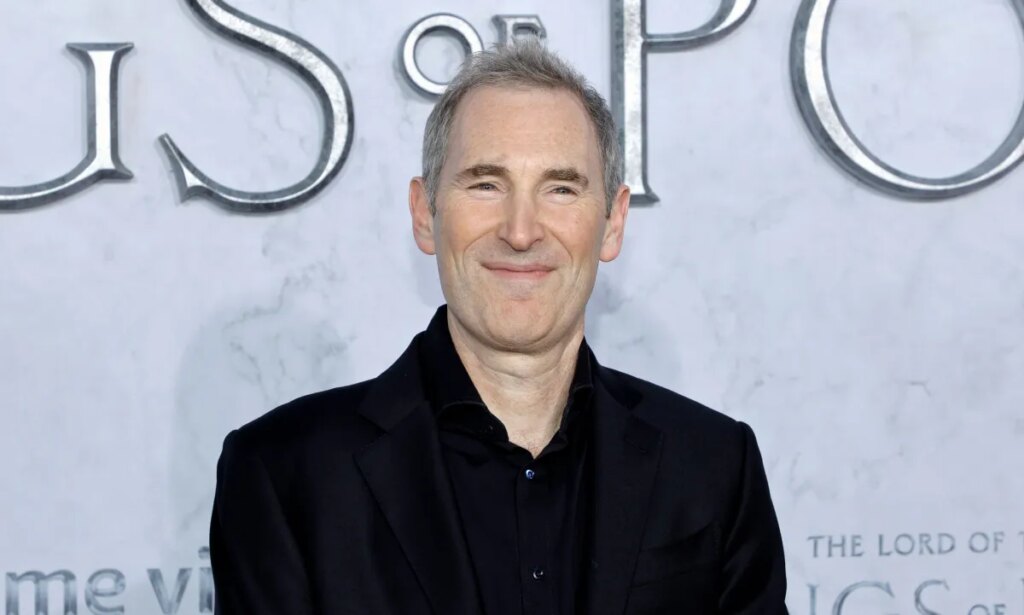

Earlier this month, Amazon CEO Andy Jassy took aim at Nvidia and Intel in his annual shareholder letter, saying that companies want better price-performance for AI and that Amazon intends to win deals on that basis. It also means even more pressure is being put on Amazon’s in-house chip-building teams, the ones we visited last month on an exclusive tour of the lab.
