For its first 30 years, Nvidia wasn’t a household name unless you were a gamer. Now, as artificial intelligence has turned the chipmaker into the world’s most valuable company, some of its original fan base feels left behind.

“The gaming division is no longer the driving force of the company, and there was clearly a time when that was the case,” said Stacy Rasgon of Bernstein Research.

Nvidia popularized graphics processing units (GPUs), which enable the fast frame rates and rendering that video games demand.

By the time Nvidia released its first GPU, the GeForce 256, in 1999, it had laid off most of its employees and nearly gone bankrupt to make it happen. Gamers embraced the new type of processor and brought Nvidia back from the brink.

As demand for AI skyrockets, nearly all of Nvidia’s revenue comes from products that serve that industry, not games. And as AI chip manufacturing shrinks the supply of available memory, Nvidia is having to make tough decisions about its priorities.

In a memory-constrained reality, it’s no surprise that Nvidia would prioritize much more profitable data center GPUs like Hopper and Blackwell.

Nvidia’s computing and networking division has averaged operating margins of 69% over the past three years, while its consumer graphics division has had operating margins of 40%.

“I understand that they’re trying to go after it, and it breaks my heart,” Greg Miller, co-founder and host of the popular video game podcast Kinda Funny Games Daily, said in an interview with CNBC.

“Dance with the people who brought you here. Gamers brought you this far,” Miller added.

If analysts’ predictions are correct, 2026 will be the first year in 30 years that Nvidia won’t release a new generation of its GeForce series of consumer graphics processing units.

Gamers are “very important” to Nvidia, the company said in an email to CNBC, adding that it is “constantly innovating, testing, and releasing” new technology specifically for gaming.

The current RTX 50 series of GeForce GPUs was announced at CES in January 2025.

But with 2026’s CES and GTC in the rearview mirror, and although Nvidia typically announces new hardware in late September, some worry that this year will be the first without a new generation.

That would be a major shift in strategy, but some gamers say it’s not a bad move given tight budgets.

“It’s kind of hard to keep up. You can’t upgrade every year, so I think it would actually help gamers to take a little bit of a break and wait for a really important generation,” said Tim Gettys, Miller’s co-founder at Kinda Funny Games.

AI takes over the profits

Nvidia’s current era of AI dominance began 20 years ago with the launch of its CUDA software toolkit in 2006. Suddenly, developers could use GPUs for general-purpose computing, not just graphics.

And in 2012, during what many consider the big bang moment for modern AI, Nvidia’s deep learning capabilities were put on display. Nvidia’s GPUs and CUDA were used to build a neural network called AlexNet that blew away its competitors in a prestigious image recognition competition.

Nvidia didn’t stop making gaming GPUs, but in 2020 it acquired high-performance computing chip maker Mellanox Technologies for $7 billion, signaling a new focus on AI GPUs.

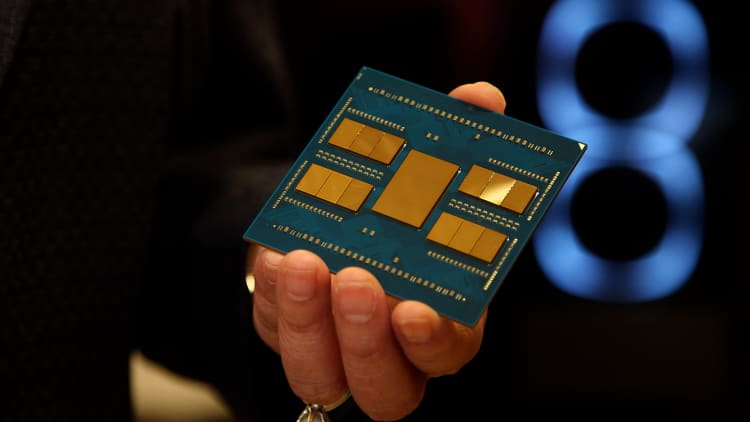

The company has since continued to release new generations of high-end GPUs, as well as full rack-scale systems for AI workloads, including the new Vera Rubin platform, which CNBC exclusively previewed in February.

Nvidia hasn’t disclosed pricing for its AI chips, but analysts estimate that a single Blackwell GPU could cost up to $40,000, and Futurum Group estimates a complete Vera Rubin system could cost up to $4 million.

In contrast, Nvidia sells its RTX 50 series gaming GPUs for between $299 and $1,999.

During the cryptocurrency peaks of 2018 and 2021, Nvidia’s GPUs, once the key to Bitcoin and Ethereum mining, were sold on online marketplaces for up to three times their list price.

Although mining changed direction in 2022 and prices dropped, Nvidia’s current RTX 5090 GPUs are still selling online for up to twice the retail price.

High demand for last year’s generation may make Nvidia less inclined to release a new one this year.

“Memory is hard to come by”

However, a shortage of memory is the more likely cause of Nvidia’s gaming slowdown.

Nvidia plans to cut production of its latest gaming GPUs by up to 40% as it faces a significant shortage of the general-purpose memory needed to manufacture GPUs, according to industry reports.

Dynamic random access memory (DRAM) enables fast temporary data storage and allows GPUs to perform parallel tasks.

Personal computers equipped with Nvidia’s gaming GPUs are bearing the brunt of the DRAM shortage. As the price of memory increases, the cost of manufacturing GPUs increases, which is passed on to consumers.

Gartner predicts that PC prices will rise 17% this year, resulting in a 10.4% decline in PC shipments.

“All of this has become so expensive that it’s alarming to see gaming-related prices go up and show no signs of coming down, and then Nvidia is clearly going after a completely different category of consumers,” Gettys said.

If the entry-level consumer PC market disappears by 2028, as Gartner predicts, the market for Nvidia’s entry-level gaming GPUs may also shrink.

Instead, Nvidia may be saving its limited memory inventory for higher-cost, higher-margin AI chips.

“If there are postponements or delays in the gaming roadmap, it’s probably mainly because the cards can’t be made anyway because memory is hard to come by,” Rasgon said. “I think any memory that is out there will be prioritized for AI computations.”

High-performance GPUs like Blackwell and Rubin are lined with dense stacks of a specific type of DRAM known as high-bandwidth memory (HBM). Rasgon said producing 1 gigabyte of HBM requires about four times the silicon wafers needed to produce the same amount of conventional DRAM.

“With this move, the entire industry is running out of the kind of memory that has traditionally been used for more consumer applications. It’s just not available,” Rasgon said.

Nvidia told CNBC that it continues to ship all GeForce GPUs in anticipation of strong demand and is working closely with suppliers to maximize memory availability.

“If they’re making three times as much money and the shareholders are three times as happy, I think they’re going to give up on gaming, even though that’s what got them there,” Gettys said.

“It feels like a slap in the face.”

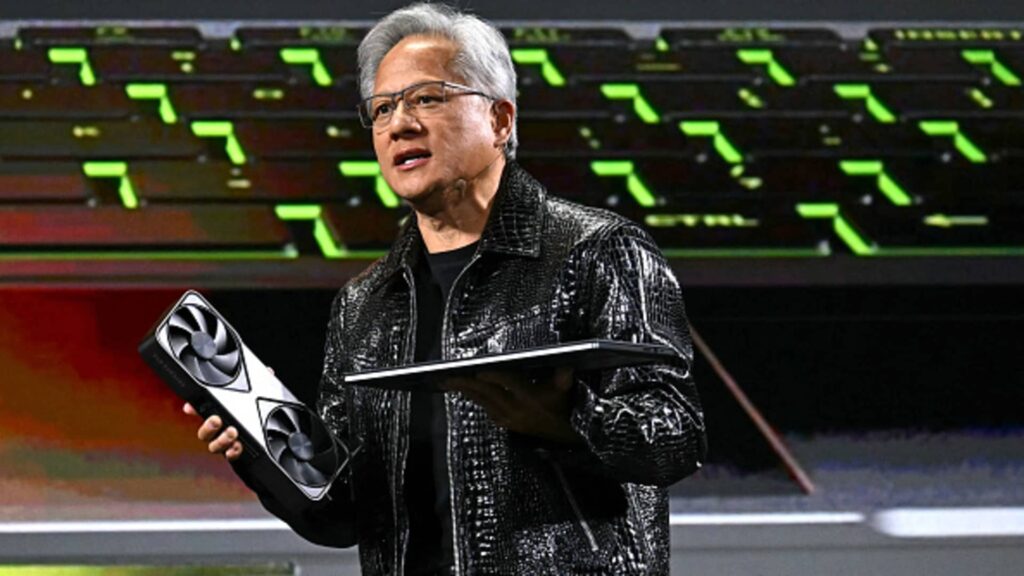

CEO Jensen Huang made a big gaming announcement at the beginning of his keynote at Nvidia’s annual GTC conference in March, but the gaming community wasn’t all that enthusiastic.

Huang announced that the next generation of Nvidia’s rendering software, Deep Learning Super Sampling (DLSS), will be released in the fall. DLSS is best known for rendering games at lower resolutions and using AI to scale up the images, which improves frame rates and lets games run more smoothly even on less powerful hardware.

The controversy around the new DLSS 5 stems from gamers’ concern that generative AI is being used to change the look of games. Huang introduced DLSS 5 with a reel of photorealistically enhanced versions of characters from popular games such as Resident Evil Requiem, Starfield, and Hogwarts Legacy.

“I play video games because they’re an art form, so I like to see the creator’s thumbprint on what I’m doing,” said Kinda Funny Games’ Miller. “It’s created a huge headache for many in the video game industry as we are dealing with so many layoffs and studio closures.”

Nvidia announced DLSS 5 at GTC on March 16, 2026, causing an uproar among gamers when it said its new Deep Learning Super Sampling rendering software used generative AI to change the art of popular video game characters like Resident Evil Requiem’s Grace Ashcroft. (Image: Nvidia)

As the gaming industry grapples with a post-pandemic economic downturn, major companies like Epic Games, Microsoft’s Xbox and Sony’s PlayStation have shuttered studios, canceled games and laid off thousands of workers.

Gettys was a fan of earlier versions of Nvidia’s DLSS, which made games more accessible on a budget.

“This technology is amazing in that it allows you to run games on low-end PCs,” he said. “But when you add this generative AI capability, it feels like a slap in the face.”

Gettys’ biggest concern is that this is a step toward fully AI-generated games, which he believes is “100% the goal.”

Elon Musk has already mentioned the possibility. In an October post on X, Musk said his xAI game studio would release “amazing AI-generated games” by the end of 2026.

“You’re literally altering the art that the developer created, and at some point you’re replacing the developer, and then their studio is shut down,” Gettys said.

“Games are a creative art form that gives developers the opportunity to tell compelling stories and immerse players in amazing worlds,” Nvidia said in a statement to CNBC. “Our RTX technology is a tool that allows game developers to realize their creative visions ... [DLSS] 5 and other AI-enhanced technologies, all working together to deliver the best performance and image quality.”

In his GTC keynote, Huang said AI will “revolutionize the way computer graphics is done.”

In a question-and-answer session the next day, Huang responded to claims from the gaming community that DLSS 5 will make games look more uniform.

“They’re completely wrong,” Huang said.

He emphasized that game developers will continue to be in control and can “fine-tune the generative AI” to suit their own style.

“Clear Favorites”

For more than a decade, Nvidia has also offered cloud gaming through a service called GeForce NOW. The service has evolved to include various subscription tiers, including a free option, and lets users stream games they own on services like Steam, with the games running on Nvidia GPUs in a data center rather than on personal devices.

“If you look at Xbox or PlayStation, you see other competitors trying to put the cloud into the hands of gamers in a really meaningful way, and Nvidia GeForce NOW has really cracked the code on that,” Miller said.

Gettys told CNBC that Nvidia’s streaming platform is “by far” the best.

“This will give millions more people access to the highest level of gaming, even if they don’t have the latest cards or anything like that. And this is really great technology,” he said.

Advanced Micro Devices is Nvidia’s top competitor in the gaming space with its Radeon series of GPUs.

However, the memory shortage remains a challenge for both.

“If Nvidia can’t get the memory, AMD isn’t going to get the memory,” Rasgon said. “Emotionally speaking, both brands have fans, and they can be rabid fans.”

“There’s a clear favorite,” Gettys said. “If you want to play on PC, you’ll need an Nvidia card.”
